Introduction

When facing a surgical procedure, it is common for patients to have questions regarding details of their procedure, recovery and/or the rehabilitation process. While some patients may already possess background knowledge on their surgery, many will not and may be undergoing a procedure for the first time in their life. Even worse, many patients commonly refer to misleading sources about their diagnosis and treatment options, which can lead to confusion and obligate the care giver to explain fact from fiction. When examining where patients seek out health information, much of the time their searching is centered around commercial websites or search engines as opposed to academic or scholarly journals (LaValley, Kiviniemi, and Gage-Bouchard 2017). With the surge of information regarding orthopedic injuries on social media, patients may even resort to platforms such as Facebook, X (formerly Twitter), Instagram, or Tik Tok as a means to answer unmet clinical questions that may arise (Hasley, Bukowiec, Zaifman, et al. 2023). With the recent surge of artificial intelligence (AI) into mainstream use, it begs the question, how can this new technology, such as the widely available platform ChatGPT or Google Gemini, be utilized in medicine?

ChatGPT in particular is an AI platform that is constructed so that the user can propose questions to the system and receive a response in a conversational manner. ChatGPT formulates its response by analyzing all public, free information available on the internet including but not limited to, books, websites, and articles and forming an artificial neuron network to provide a reply in a humanistic manner (Cascella et al. 2023). The system is able to go back and forth with the user, answering follow up questions and clarifying information while fostering an on-going dialogue. Similarly, Google’s Gemini, another prominent and free AI platform, offers comparable capabilities leveraging its extensive access to information to provide real-time answers to user queries. With both platforms available for public use, it raises the question of how these technologies might assist in answering common medical questions, particularly in the context of surgery and rehabilitation.

In the field of medicine ChatGPT has been proven effective at answering general medical queries (Johnson, Goodman, Patrinely, et al. 2023). In addition to this ChatGPT demonstrated that it was capable of performing at or near the threshold needed to pass a National Board of Medical Examiners (NBME) style exam and was equated to that of a third year medical student (Kung, Cheatham, Medenilla, et al. 2023; Gilson, Safranek, Huang, et al. 2023). Furthermore, researchers demonstrated that AI chatbot responses may even be superior to those of a licensed medical provider. One study demonstrated that the responses from an AI chatbot to general medical questions were actually preferred over those of a verified medical provider when being evaluated by other medical professionals (Ayers, Poliak, Dredze, et al. 2023). Studies evaluating Google’s Gemini have shown comparable results to ChatGPT in terms of accuracy when answering medical questions, including those related to pediatric orthopaedics (Pirkle, Yang, and Blumberg 2024). However, occasional discrepancies were noted, particularly in Gemini’s citations, where several referenced studies were found to contain errors or were unverifiable (Pirkle, Yang, and Blumberg 2024).

AI has exceeded what was previously thought possible with respect to internet chat systems The success of AI systems and their easy accessibility raise the question, are patients using AI to answer their medical questions? For orthopaedics, there are a number of questions that a patient may have, particularly in regard to potential surgical procedures. Anterior cruciate ligament reconstruction (ACLR) is a common orthopaedic procedure that is often performed on younger patients (Leathers et al. 2015). This information, coupled with the knowledge that younger patients often turn to online resources for health related information, creates the need to evaluate online health resources (Jia, Pang, and Liu 2021). The aim of this study is twofold: (1) to evaluate whether ChatGPT and Google’s Gemini can be relied upon as a resource for patients to access accurate information regarding preoperative, intraoperative, and postoperative events in relation to common orthopaedic procedures, particularly ACLR; and (2) to compare the accuracy of information provided against each other. Previous studies have analyzed the usability and accuracy of ChatGPT for various orthopedic conditions. To our knowledge no prior studies have comparatively evaluated AI platforms against each other for accuracy and comprehension regarding ACLR.

Methods

Authors formulated thirteen potential patient questions that may arise prior to ACLR. Each question was then reviewed by a group of five board-certified orthopedic surgeons and their validity was confirmed for inclusion. Questions were then entered into both ChatGPT 3.5 and Google’s Gemini (Table 1). Responses were recorded and de-identified so that the AI system of origin was not discernible. The questions, along with the de-identified answers, were then independently evaluated by the same group of board-certified orthopedic surgeons. A four-point scale, with a score of one representing an excellent response and four indicating a need for major clarification, was used to grade each AI system’s response (Li et al. 2024; Johns et al. 2024). Additionally, the surgeons indicated with a ‘yes’ or ‘no’ whether they believed each AI system’s response would be helpful to patients. For example, one response to the question “Do I need ACL reconstruction surgery?,” ChatGPT responded with "However, whether or not you need ACL (anterior cruciate ligament) reconstruction surgery depends on various factors including the severity of your injury, your activity level, your age, and your overall health. If you have experienced an ACL tear, it’s essential to consult with a qualified orthopedic surgeon or sports medicine specialist. They will assess your condition through physical examination, imaging tests (such as MRI), and discussion of your symptoms and lifestyle. Based on their evaluation, they can recommend the most appropriate treatment plan, which may or may not involve surgery. In some cases, particularly if you’re not highly active or if the tear is partial and your knee remains stable, conservative treatment such as physical therapy and bracing may be sufficient. However, if you’re an athlete or if the tear is severe and causing instability in your knee, surgery may be recommended to reconstruct the torn ACL and restore stability to the joint.

Ultimately, the decision to undergo ACL reconstruction surgery should be made in consultation with a qualified medical professional who can provide personalized advice based on your individual circumstances." which all reviews marked as “yes.” This indicated that the surgeons agreed with this response and they thought the response would be overall helpful for the patient to read. Table 2 shows examples of responses from both ChatGPT and Gemini showing the difference in how each AI answers the same question. For the first question all but one surgeon found ChatGPT’s answer useful while all surgeons found Gemini’s answer useful. However, for the second question, three of the surgeons found ChatGPT’s answer not useful while all surgeons found Gemini’s answer useful.

Each response was also evaluated for readability. The reading level of the ChatGPT and Google Gemini responses were calculated using the WordCalc software package, and readability was assessed using the Flesch-Kincaid (FK) grade level, FK reading ease score, Simple Measure of Gobbledygook index (SMOG), Coleman-Liau index (CL), Gunning fog index (GF), and automated readability index.

Results

Both systems provided responses to all 13 (100.00%) and utilized what was determined to be logical reasoning without providing information that contraindicated itself. Additionally, 100% of the responses provided by both systems included a disclaimer to discuss further medical information with either a “medical team”, “physical therapist”, and/or “surgeon”.

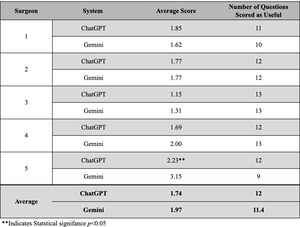

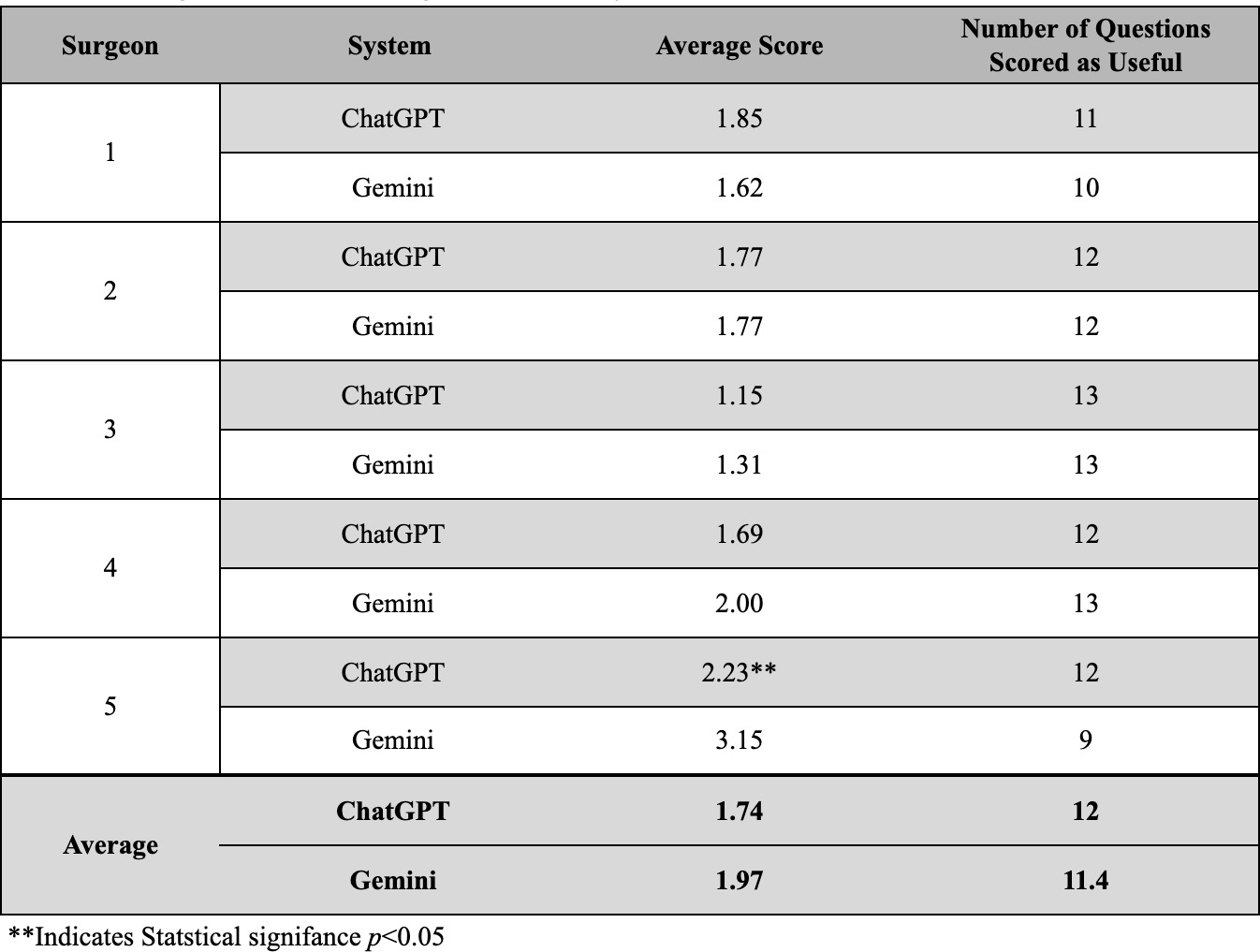

When comparing the average scores for each question, ChatGPT had a lower average score, indicating a better response, on 9 of the 13 questions analyzed. ChatGPT’s lowest average score was on question 6 at 1.0, while Gemini’s lowest average score was for question 5 at 1.4. ChatGPT’s highest average score appeared on question 5 at 3.2, whereas Gemini’s highest average score was for question 1 at 2.8. Significant differences between the average score on questions 1 and 5 were noted (p<0.01), while other questions demonstrated comparable performance between ChatGPT and Gemini. Across all thirteen questions, three of the surgeons scored ChatGPT with lower scores on average compared to Gemini (Table 3). When comparing the average scores provided to ChatGPT and Gemini responses provided by each surgeon across the entire question set, surgeon 5 was noted to score ChatGPT responses significantly better (2.23 vs 3.15; p=0.021) (Table 3).

Overall, ChatGPT responses were deemed useful an average of 12 times by each surgeon, while Gemini responses were rated useful 11.4 times on average (Table 3). Surgeon 3 rated the highest number of ChatGPT responses as useful, with 13 out of 13 rated “yes,” while Surgeon 1 gave the fewest “yes” ratings for ChatGPT at 11 (Table 3). For Gemini, Surgeon 3 also rated all responses as useful (13 out of 13), while Surgeon 5 found the fewest Gemini responses useful, with only 9 “yes’” ratings (Table 3).

ChatGPT

ChatGPT responses demonstrated variability across questions and surgeons. With respect to each surgeon’s score on the set of responses the average ranged from 1.15 to 2.23, with surgeon one scoring an average of 1.85 (SD = 1.07), surgeon two 1.77 (SD = 0.83), surgeon three 1.15 (SD = 0.38), surgeon four 1.69 (SD = 0.63), and surgeon five scoring 2.23 (SD = 0.83). ANOVA analysis revealed significant variability in scores for responses to the same question (F = 4.13, p = 0.00016). Significant variability was also found in scores assigned by different surgeons across all responses (F = 3.18, p = 0.0196).

Gemini

When analyzing the scores assigned to each response by each surgeon, the average ranged from 1.31 to 3.15. Surgeon one averaged 1.62 (SD = 0.87), Surgeon two 1.77 (SD = 0.73), Surgeon three 1.31 (SD = 0.48), Surgeon four 2.00 (SD = 0.71), and Surgeon five 3.15 (SD = 0.69). ANOVA revealed no significant variability in scores for responses to the same question (F = 1.28, p = 0.26). Significant variability was found in scores assigned by different surgeons across all responses (F = 13.11, p < 0.001).

Readability

ChatGPT’s Flesch-Kincaid (FK) Reading Ease score was 23.5, while Gemini scored 43.27 (p < 0.001). ChatGPT’s New Dale Chall (NDC) Score was 8.19, compared to Gemini’s 7.27. Both systems recorded an Spache Readability (SR) Score of 5. FK Grade Level scores were 12 for ChatGPT and 11.06 for Gemini (p < 0.001). Gunning Fog (GF) Index scores were 18.65 for ChatGPT and 14.92 for Gemini, with both systems recording Coleman-Liau (CL) scores of 12. SMOG index values were 0.83 for ChatGPT and 0.63 for Gemini.

Discussion

The widespread availability of information has significantly impacted the medical field, providing patients with numerous resources to answer their questions. While the internet can serve as a valuable tool for education, it also introduces the risk of disseminating inaccurate or misleading information. AI represents one of the newest developments in information delivery, providing efficient access to knowledge. This study examines the potential role of ChatGPT and Google’s Gemini in the medical industry. Existing literature has explored how ChatGPT and Gemini may be utilized to assist in making diagnoses in a multitude of fields, including: ophthalmology, radiology, and emergency medicine (Madadi, Delsoz, Lao, et al. 2023; Delsoz, Madadi, Munir, et al. 2023; Berg, van Bakel, van de Wouw, et al. 2023; Srivastav, Chandrakar, Gupta, et al. 2023). The systems have also demonstrated their capabilities when completing multiple choice style board examination questions across multiple specialty exams (Madadi, Delsoz, Lao, et al. 2023; Delsoz, Madadi, Munir, et al. 2023; Kuroiwa, Sarcon, Ibara, et al. 2023; Bahir, Zur, Attal, et al. 2024).

The scores varied between surgeons with ChatGPT and Gemini with respect to ACLR related questions. ChatGPT outperformed Gemini on 9 of the 13 questions on average. The scores ranged from 1 (excellent response) to 4 (requiring major clarification). The surgeon evaluators felt ChatGPT responses required less clarification, representing clear and accurate responses. Variability was noted in the responses from surgeons on the same questions, as well as across the entire question set for ChatGPT responses. This variability was only shown to be significant between surgeons across the entire question set when analyzing Gemini responses. ChatGPT’s scores varied significantly both between different questions and between surgeons, suggesting its performance was influenced by the specific question content and individual surgeon preferences. These findings show that while both systems had variations in how their responses were scored, the patterns of these variations were different.

In addition to quantitative scoring, surgeons evaluated the utility of each response using a binary “yes” or “no.” ChatGPT’s responses were deemed useful an average of 12 times per surgeon, compared to Gemini, which was rated useful an average of 11.4 times. While ChatGPT may have provided technically superior answers to specific questions, Gemini still formulated responses that were perceived as helpful to patients.

The findings demonstrate AI’s ability to provide answers to questions that may be proposed by patients. As such, these new information resources may become useful tools for patients to learn about their orthopaedic procedure and the recovery associated with it. Alternatively, chat-based systems may provide false information. A proposed reason for providing false information is that some large language models (LLM), including ChatGPT have knowledge cut-off dates (OpenAI 2023a). Specifically ChatGPT’s cut-off date is October 2023, indicating that much of its knowledge base stems from prior to that date (OpenAI 2023a). While ChatGPT indicates to its user that it is able to search the web despite its knowledge cut-off, it is still possible the system may lack up to date resources. In contrast, Google’s Gemini does not possess a quoted cut-off date, representing a potential advantage over ChatGPT, however this is unclear.

While this current study sought to evaluate the ability of ChatGPT and Gemini to answer questions related to a procedure or diagnosis, literature exists that aimed to evaluate whether patients can reliably use AI systems to self-diagnosis common orthopaedic conditions (Kuroiwa, Sarcon, Ibara, et al. 2023). Kuroiwa et al. found ChatGPT and Gemini could provide answers to the medical questions posed, however, their accuracy and reliability were inconsistent (Kuroiwa, Sarcon, Ibara, et al. 2023). In this study, ChatGPT outperformed Gemini on 9 of 13 questions and received higher usefulness ratings from surgeons, averaging 12 “yes” responses compared to Gemini’s 11.4. The variability in surgeon evaluations highlights how individual interpretation impacts the assessment of AI responses. By including disclaimers urging users to consult healthcare professionals, both systems acknowledge these limitations and emphasize the need for expert oversight when using AI-generated medical information.

Readability analysis further distinguished the systems. Gemini obtained a higher FK Reading Ease score and a lower FK Grade Level score (11.06) compared to ChatGPT (12.00), indicating that Gemini’s responses were easier to read and required about one less year of education to understand. However, both systems presented information at a level far above the average American reading level of 7th/ 8th grade (Marchand 2017). The readability scores reinforce the notion that information provided by these LLMs, while useful to the appropriate audience, may be too complex for the average American to understand. This heightens the concern that the information patients access may be misunderstood or misinterpreted if it cannot fully be understood.

Further concern exists with respect to the use of LLMs and personal health information. ChatGPT states it can delete data however, it is not able to delete the question prompts (OpenAI 2023b). Open AI explicitly warns not to include any personal information in prompts (OpenAI 2023b). Additionally, Gemini retains your personal information even if you delete your account for “as long as reasonably necessary to provide services to you.” (Gemini 2024) Although medical history is not explicitly stated as the type of personal information they save, caution should be exercised. Patients may decide to include personal health details in their queries with ChatGPT or Gemini as a means to receive the most accurate response. This practice carries the risk of exposing sensitive health information due to the uncertain privacy safeguards of AI systems. It also raises ethical concerns regarding the use of user inputs to enhance the functionality of these systems.

Our investigation highlights that ChatGPT and Gemini can provide answers with relative accuracy to patients, however, this does not take into account a major factor: surgeon to surgeon differences. For instance it was found that operative time may vary based on facility and based on each specific surgeon (Glance et al. 2018; Strum et al. 2000). When looking at ACLR specifically, there are a multitude of different rehabilitation plans, each with their own specific phases (Nelson et al. 2021). Other factors can complicate rehabilitation as well such as whether the procedure is a primary repair or revision procedure. Revision procedures present an area for variation in protocol such as longer time in a brace and longer time to return to sport (Rugg et al. 2020).

Such variation reinforces the notion that specifics should still come from the surgeon or healthcare provider. This was reflected in the answers provided by ChatGPT and Gemini all containing some level of disclaimer. A disclaimer is reassuring, however, whether a patient will decide to reach out to their healthcare provider, or utilize the information provided by AI technology remains unknown. This then has the potential to introduce discordance into the relationship between the orthopaedic surgeon and patient if expectations are not met. This relationship is crucial to uphold due to the strong implications that expectations play on patient success and satisfaction. Conner-Spady et al. found that in total hip replacement (THR) and total knee replacement (TKR) patients, they were significantly more satisfied when expectations were met (Conner-Spady et al. 2020). This highlights how crucial it is for patients to address expectations with their surgeon prior to their procedure, not just AI sources.

Further studies should be performed to learn how patients use ChatGPT and Gemini to answer medical questions and how they then use this information. In addition, how physicians view AI resources and how they feel it affects the patient-doctor relationship needs to be investigated.

Limitations

This study was not devoid of limitations. The first major limitation was the fact that questions were formulated using research regarding patient expectations as opposed to information directly from patients. While surveying patients directly would provide more accurate questions that could be posed to ChatGPT, this would be unlikely to substantially change the response from the AI system. The other limitation is the fact that only one orthopaedic surgeon was involved in the process of validating patient questions and validity of the data collected from both ChatGPT and the literature search. In the future, a larger set of questions and more experts in the field would help to explore this question further.

Conclusion

ChatGPT and Gemini, among other similar artificial intelligence systems, have both garnered interest with incredible speed among medical professionals. These results clearly elucidate how ChatGPT and Gemini are viable resources for patients to obtain general information, however, this information lacks crucially specific details. While these findings are promising, they also underscore the importance of continued evaluation and refinement of AI models for medical use, particularly to ensure that they meet the diverse needs of healthcare professionals and patients. No orthopaedic procedure is exactly the same and no patient is exactly the same, therefore, patients should continue to consult their surgeon for specific details about their injury, surgery, and rehabilitation. While patients may continue to turn to online resources, it is important for the orthopaedic surgeon to understand patient expectations, whether they come from AI resources or not, as to not place strain on the surgeon-patient relationship. As the medical field adapts to these new technological advances, more work is needed to determine what role artificial intelligence may play and what impact it can have on patients and surgeons alike.